SonicSim: A Customizable Simulation Platform for Speech Processing in Moving Sound Source Scenarios

Abstract

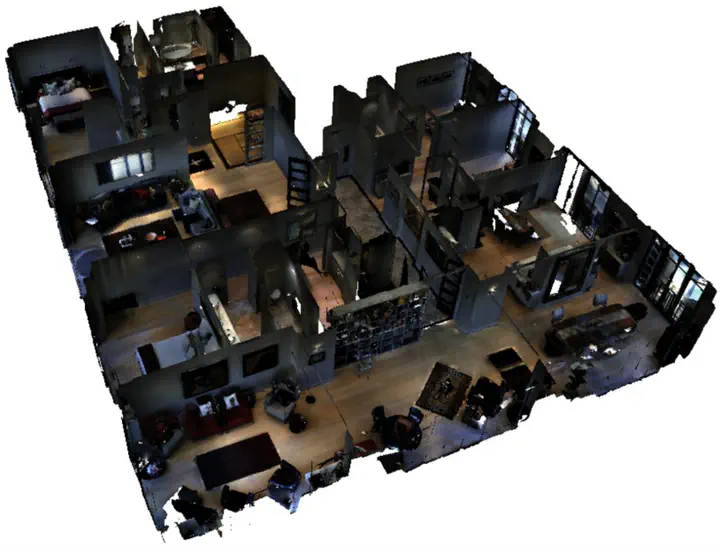

Systematic evaluation of speech separation and enhancement models in moving sound source scenarios requires large and diverse data, but real-world datasets are limited in size and synthetic datasets often lack acoustic realism. We present SonicSim, a customizable simulation toolkit built on Habitat-sim for generating high-fidelity audio data with moving sources. SonicSim supports control at the scene, microphone, and source levels, which enables broad data diversity. Using SonicSim, we build SonicSet, a dataset that combines audio from LibriSpeech, FSD50K, and FMA with Matterport3D scenes, and we record real-world reference data to analyze the acoustic gap between synthetic and real recordings. Results show that models trained on SonicSet generalize better to real-world data than models trained on existing synthetic datasets.